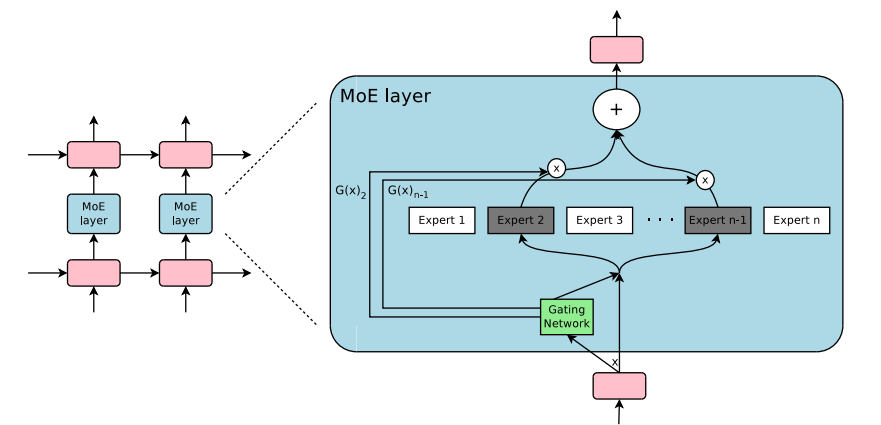

Sparsity in MoE

Increase the model's size (parameter count) while adding very little compute cost

MoE structure example

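- $y = \sum_{i=1}^{n} G(x)_i \, E_i(x)$

A form of "weighted multiplication"

- where $G(x)_i$ is the gating weight assigned to expert $i$

- where $E_i(x)$ is the output of expert $i$

- A typical gating function is a softmax over a learned linear map: $G(x) = \mathrm{Softmax}(x \cdot W_g)$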

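To make the computation sparse, Top-k gating is usually chosen: for each token only the $k$ highest-scoring experts are evaluated

- Typically $k$ is small, for example $k = 2$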
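Top-k gating can be sketched in a few lines of PyTorch. This is a minimal illustration, assuming the common formulation in which all router logits outside the top k are masked to negative infinity before the softmax; the function name `top_k_gating` and the example logits are made up for this sketch.

```python
import torch
import torch.nn.functional as F


def top_k_gating(logits: torch.Tensor, k: int) -> torch.Tensor:
    """Return sparse gate weights: softmax over the k largest logits, 0 elsewhere."""
    top_vals, top_idx = logits.topk(k, dim=-1)
    # Mask everything outside the top-k with -inf so softmax gives it weight 0.
    masked = torch.full_like(logits, float("-inf"))
    masked.scatter_(-1, top_idx, top_vals)
    return F.softmax(masked, dim=-1)


# Router logits for 2 tokens over 4 experts (made-up numbers).
logits = torch.tensor([[2.0, 1.0, 0.5, -1.0],
                       [0.0, 3.0, 0.1, 2.5]])
gates = top_k_gating(logits, k=2)
print(gates)
```

Each row of `gates` has exactly two non-zero entries that sum to 1, so only two experts need to be evaluated per token.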

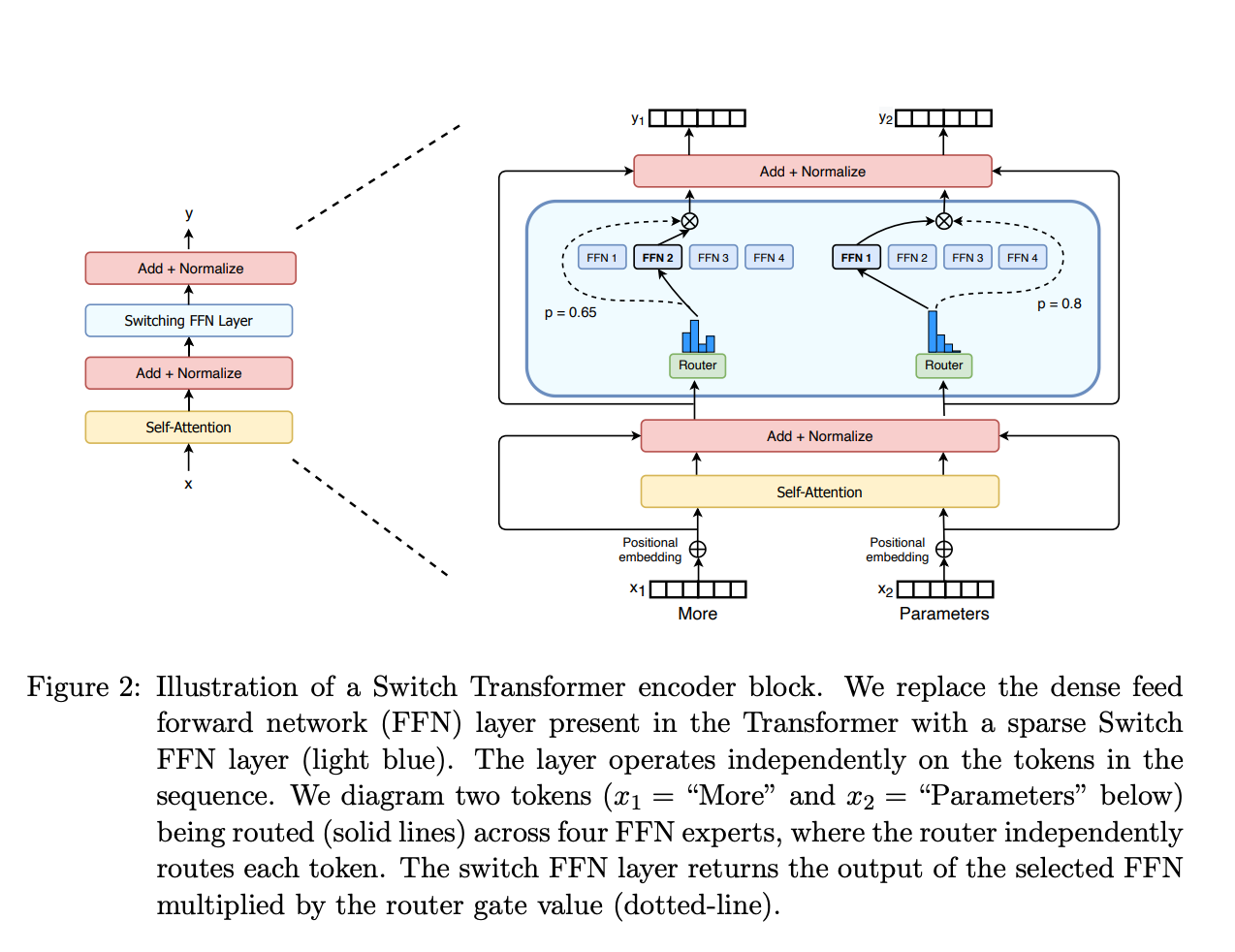

MoE and Transformers

Switch Transformers replaces the FFN in the Transformer with an MoE structure

- Switch Transformers

  - T5-based MoEs going from 8 to 2048 experts

- Mixtral 8x7B

  - 8 experts

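How the experts in an MoE are determined

- Each expert is determined by the parameters learned during training

- Taking Mixtral 8x7B as an example

  - The FFN layer is expanded into 8 experts

  - Each expert is an FFN layer of the original size

  - A gating network selects the most suitable experts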

Feed-forward network (FFN)

Standard FFN implementation

```
(mlp): LlamaMLP(
  (gate_proj): Linear(in_features=2048, out_features=8192, bias=False)
  (up_proj): Linear(in_features=2048, out_features=8192, bias=False)
  (down_proj): Linear(in_features=8192, out_features=2048, bias=False)
  (act_fn): SiLU()
)
```

- Add a gating network; in the forward function the gating network selects the most suitable experts, and each selected expert performs the following computation:

  - `self.down_proj(self.act_fn(self.gate_proj(x)) * self.up_proj(x))`
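Putting the gating network and the per-expert FFN together, a sparse MoE layer might look like the sketch below. It is an illustrative toy, not the Mixtral implementation: the class names `Expert` and `SparseMoE`, the loop over experts, and the small layer sizes are all assumptions chosen for readability.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Expert(nn.Module):
    """One expert: the same gated FFN computation as the MLP shown above."""

    def __init__(self, hidden, inter):
        super().__init__()
        self.gate_proj = nn.Linear(hidden, inter, bias=False)
        self.up_proj = nn.Linear(hidden, inter, bias=False)
        self.down_proj = nn.Linear(inter, hidden, bias=False)
        self.act_fn = nn.SiLU()

    def forward(self, x):
        return self.down_proj(self.act_fn(self.gate_proj(x)) * self.up_proj(x))


class SparseMoE(nn.Module):
    def __init__(self, hidden, inter, num_experts=8, top_k=2):
        super().__init__()
        self.top_k = top_k
        self.router = nn.Linear(hidden, num_experts, bias=False)  # gating network
        self.experts = nn.ModuleList(
            [Expert(hidden, inter) for _ in range(num_experts)]
        )

    def forward(self, x):  # x: (num_tokens, hidden)
        logits = self.router(x)
        top_vals, top_idx = logits.topk(self.top_k, dim=-1)
        weights = F.softmax(top_vals, dim=-1)  # normalize over the chosen experts
        out = torch.zeros_like(x)
        for e, expert in enumerate(self.experts):
            # Tokens that routed to expert e, and which of their top-k slots it fills.
            token_idx, slot = (top_idx == e).nonzero(as_tuple=True)
            if token_idx.numel() == 0:
                continue  # this expert received no tokens
            gate = weights[token_idx, slot].unsqueeze(-1)
            out[token_idx] += gate * expert(x[token_idx])
        return out


torch.manual_seed(0)
moe = SparseMoE(hidden=16, inter=32, num_experts=8, top_k=2)
y = moe(torch.randn(5, 16))
print(y.shape)
```

Only the experts selected for a token run on that token, which is why the parameter count grows with the number of experts while the per-token compute grows only with `top_k`.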

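Training MoE

- MoE is trained with load balancing

  - Before training: every expert is freshly initialized and has no particular meaning (no specialization) yet

  - Training without any control: each step selects the top-k (k = 2) experts for training and parameter updates, so the router tends to keep choosing the experts that happen to train fastest

  - Load balancing: during training, give every expert nearly the same number of training samples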
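One common way to encourage balanced routing is an auxiliary loss added to the training objective. The sketch below follows the style of the Switch Transformers auxiliary loss, generalized to top-k routing. It is an assumption that this is the mechanism meant here, and the function name `load_balancing_loss` is hypothetical.

```python
import torch
import torch.nn.functional as F


def load_balancing_loss(router_logits: torch.Tensor, top_k: int) -> torch.Tensor:
    """Auxiliary loss that is smallest when tokens are spread evenly over experts."""
    num_experts = router_logits.shape[-1]
    probs = F.softmax(router_logits, dim=-1)              # (tokens, experts)
    top_idx = probs.topk(top_k, dim=-1).indices           # (tokens, top_k)
    # f_i: fraction of routing slots that went to expert i.
    assigned = F.one_hot(top_idx, num_experts).float()    # (tokens, top_k, experts)
    f = assigned.sum(dim=1).mean(dim=0) / top_k           # (experts,)
    # P_i: mean router probability given to expert i.
    p = probs.mean(dim=0)                                 # (experts,)
    return num_experts * torch.sum(f * p)


# Made-up router logits for 8 tokens and 4 experts.
balanced_logits = 10.0 * F.one_hot(torch.arange(8) % 4, 4).float()
collapsed_logits = 10.0 * F.one_hot(torch.zeros(8, dtype=torch.long), 4).float()

balanced = load_balancing_loss(balanced_logits, top_k=1)
collapsed = load_balancing_loss(collapsed_logits, top_k=1)
print(balanced.item(), collapsed.item())
```

The loss is close to 1 when every expert receives the same share of tokens and approaches the number of experts when all tokens collapse onto one expert, so minimizing it pushes the router toward an even split.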

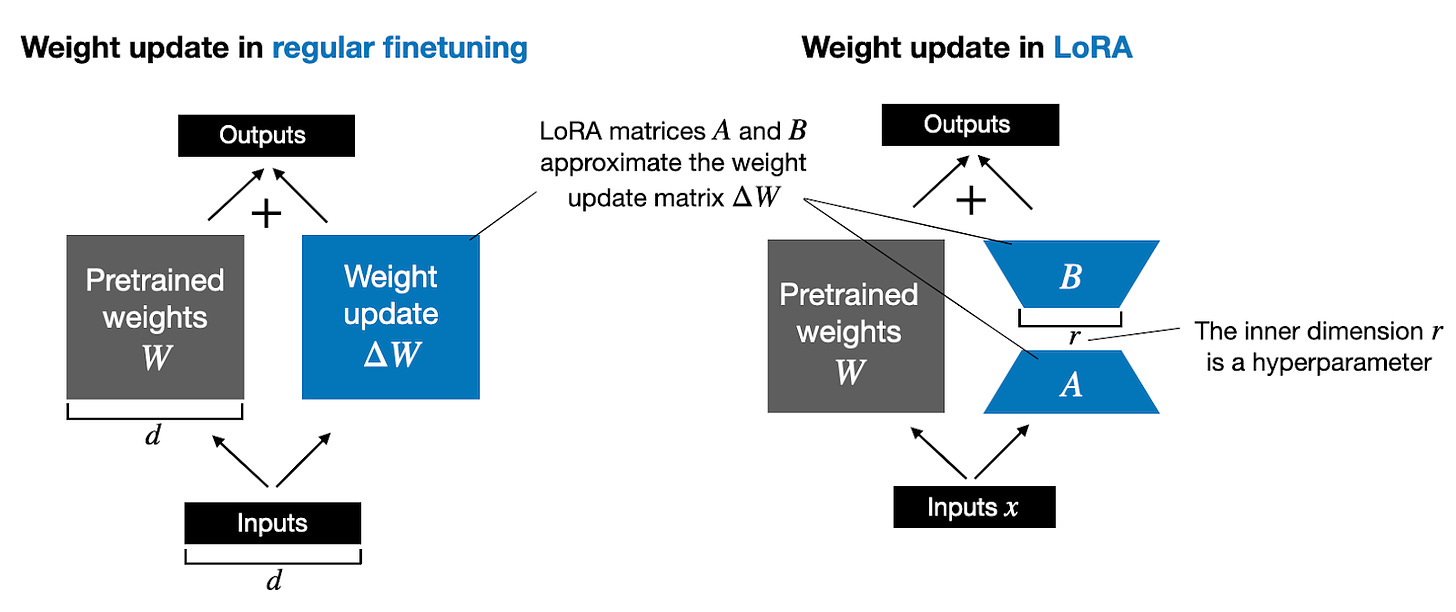

Low-rank adaptation (LoRA)

- A popular lightweight technique for fine-tuning LLMs

- Fine-tunes an LLM quickly using very few trainable parameters

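LoRA: basic idea

- Conventional training and fine-tuning: update the full weight matrix, $W_{\text{updated}} = W + \Delta W$

- LoRA: keep $W$ frozen and learn a low-rank approximation of the update, $\Delta W \approx \alpha A B$, with $A \in \mathbb{R}^{d_{in} \times r}$, $B \in \mathbb{R}^{r \times d_{out}}$, and $r \ll \min(d_{in}, d_{out})$

LoRA inference

- Inference after conventional training and fine-tuning: $y = x (W + \Delta W)$

- LoRA inference: $y = x W + \alpha \, x A B$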
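The two inference paths give the same result, because the low-rank update can be folded into the frozen weight once training is done. The following sketch checks this numerically with plain tensors; the dimensions, the value of `alpha`, and the random matrices are arbitrary choices for illustration.

```python
import torch

torch.manual_seed(0)
d_in, d_out, rank, alpha = 64, 32, 4, 2.0

W = torch.randn(d_in, d_out)   # frozen pretrained weight
A = torch.randn(d_in, rank)    # LoRA factor A
B = torch.randn(rank, d_out)   # LoRA factor B (non-zero here, as if already trained)
x = torch.randn(8, d_in)

# Two-branch LoRA inference: base output plus the low-rank correction.
y_branches = x @ W + alpha * (x @ A @ B)

# Merged inference: fold the update into W once, then use a single matmul.
W_merged = W + alpha * (A @ B)
y_merged = x @ W_merged

full_params = W.numel()              # parameters updated by full fine-tuning
lora_params = A.numel() + B.numel()  # parameters trained by LoRA
print(full_params, lora_params)
```

With these sizes LoRA trains 384 parameters instead of 2048, and the gap widens quickly for realistic layer sizes because the LoRA count grows linearly in the layer dimensions rather than with their product.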

LoRA implementation

```python
import torch
import torch.nn as nn


class LoRALayer(nn.Module):
    def __init__(self, in_dim, out_dim, rank, alpha):
        super().__init__()
        # Scale the random initialization of A by 1 / sqrt(rank).
        std_dev = 1 / torch.sqrt(torch.tensor(rank).float())
        self.A = nn.Parameter(torch.randn(in_dim, rank) * std_dev)
        # B starts at zero, so the update A @ B is zero before training.
        self.B = nn.Parameter(torch.zeros(rank, out_dim))
        self.alpha = alpha

    def forward(self, x):
        # Low-rank update: alpha * x A B
        x = self.alpha * (x @ self.A @ self.B)
        return x


class LinearWithLoRA(nn.Module):
    def __init__(self, linear, rank, alpha):
        super().__init__()
        self.linear = linear  # the original (pretrained) nn.Linear layer
        self.lora = LoRALayer(
            linear.in_features, linear.out_features, rank, alpha
        )

    def forward(self, x):
        # Output of the original layer plus the LoRA correction.
        return self.linear(x) + self.lora(x)
```
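A short usage sketch for the wrapper: wrap an existing linear layer, freeze its pretrained weights, and count what remains trainable. The two classes are repeated in compact form only so that the snippet runs on its own; the layer sizes, `rank=8`, and `alpha=16` are hypothetical values.

```python
import torch
import torch.nn as nn


class LoRALayer(nn.Module):  # repeated from above so this snippet is self-contained
    def __init__(self, in_dim, out_dim, rank, alpha):
        super().__init__()
        std_dev = 1 / torch.sqrt(torch.tensor(rank).float())
        self.A = nn.Parameter(torch.randn(in_dim, rank) * std_dev)
        self.B = nn.Parameter(torch.zeros(rank, out_dim))
        self.alpha = alpha

    def forward(self, x):
        return self.alpha * (x @ self.A @ self.B)


class LinearWithLoRA(nn.Module):
    def __init__(self, linear, rank, alpha):
        super().__init__()
        self.linear = linear
        self.lora = LoRALayer(linear.in_features, linear.out_features, rank, alpha)

    def forward(self, x):
        return self.linear(x) + self.lora(x)


torch.manual_seed(0)
base = nn.Linear(256, 1024, bias=False)  # stands in for a pretrained layer
x = torch.randn(4, 256)
y_before = base(x)

layer = LinearWithLoRA(base, rank=8, alpha=16)
for p in layer.linear.parameters():
    p.requires_grad = False  # freeze the pretrained weights; only A and B train

trainable = sum(p.numel() for p in layer.parameters() if p.requires_grad)
frozen = sum(p.numel() for p in layer.parameters() if not p.requires_grad)
same_at_init = torch.allclose(layer(x), y_before)  # B is zero, so nothing changes yet
print(trainable, frozen, same_at_init)
```

Because `B` is initialized to zero, the wrapped layer reproduces the original layer's output exactly until training begins to move `A` and `B`.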

Continue in the notebook

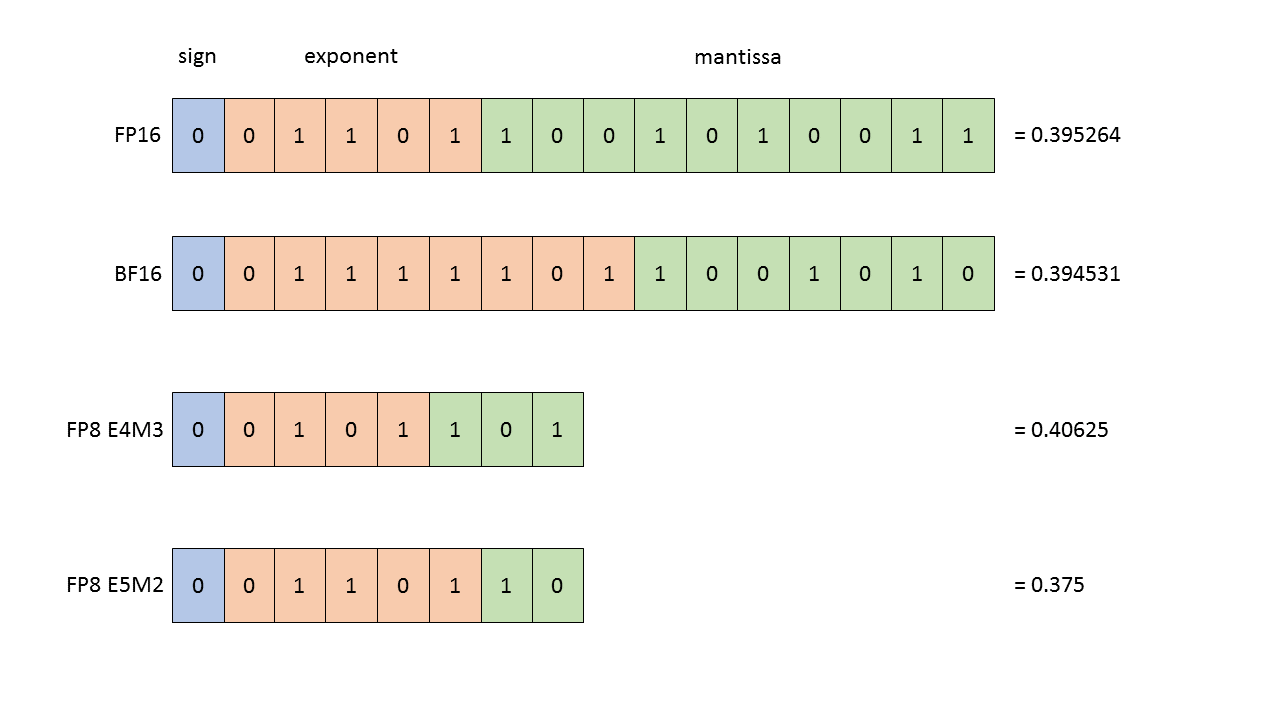

Floating-point representation

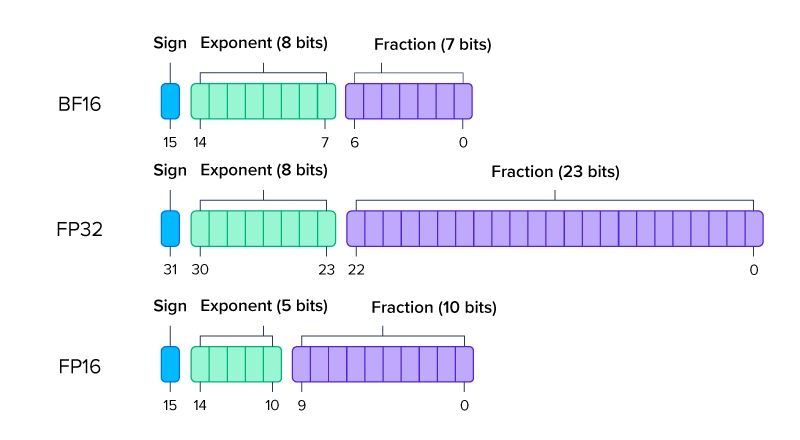

- A floating-point number is a signed binary number; depending on its precision, the common formats are:

  - Double precision: FP64

  - Single precision: FP32

  - Half precision: FP16
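The practical difference between these formats can be inspected with `torch.finfo`, which reports the bit width, machine epsilon, and largest representable value of a floating-point dtype. This is a small illustrative sketch; the dictionary name `formats` is arbitrary.

```python
import torch

formats = {
    "FP64": torch.finfo(torch.float64),
    "FP32": torch.finfo(torch.float32),
    "FP16": torch.finfo(torch.float16),
}

for name, info in formats.items():
    # bits: storage width; eps: gap between 1.0 and the next value; max: largest finite value
    print(f"{name}: bits={info.bits}  eps={info.eps:.3e}  max={info.max:.3e}")
```

Lower precision halves the memory per value but also shrinks the range and widens the gap between neighboring representable numbers.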

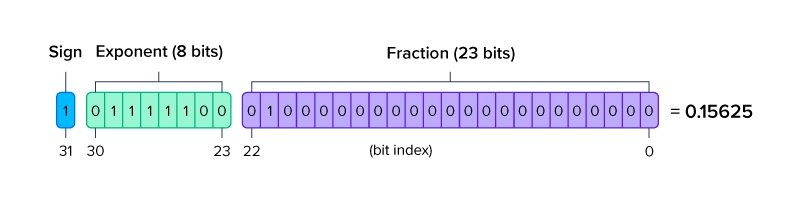

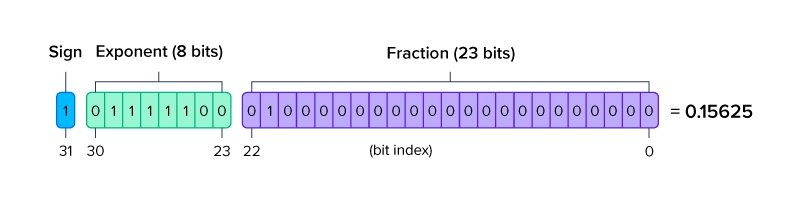

- Taking FP32 as an example:

  - Sign: 1 bit

  - Exponent: 8 bits

  - Fraction: 23 bits
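The three FP32 fields can be extracted from a value's raw bits with Python's `struct` module. The sketch below assumes the standard IEEE 754 single-precision layout, including the exponent bias of 127 and the implicit leading 1 for normal numbers, neither of which is stated above; the helper names are made up.

```python
import struct


def decode_fp32(value: float):
    """Split an FP32 value into its sign, exponent, and fraction bit fields."""
    (bits,) = struct.unpack(">I", struct.pack(">f", value))
    sign = bits >> 31                # 1 bit
    exponent = (bits >> 23) & 0xFF   # 8 bits, stored with a bias of 127
    fraction = bits & 0x7FFFFF       # 23 bits
    return sign, exponent, fraction


def encode_normal(sign: int, exponent: int, fraction: int) -> float:
    """Rebuild a normal number: (-1)^sign * 2^(exponent - 127) * (1 + fraction / 2^23)."""
    return (-1) ** sign * 2.0 ** (exponent - 127) * (1 + fraction / 2 ** 23)


sign, exponent, fraction = decode_fp32(-6.25)
print(sign, exponent, fraction)                 # 1 129 4718592
print(encode_normal(sign, exponent, fraction))  # -6.25
```

Here -6.25 equals -1.5625 × 2², so the stored exponent is 2 + 127 = 129 and the fraction field encodes 0.5625 × 2²³.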
